Augury

At its core, Augury records gaze data from XR sessions and visualizes user focus through density maps and gaze plots (heatmaps are a planned feature). Beyond simple tracking, it also features a playback system that lets researchers rewatch user sessions in VR, with synchronized gaze data overlaid onto the original environment. The tool logs all captured data with precise timestamps, making it easy to integrate external sources such as physiological sensors, interaction logs, or biometric feedback. Researchers can therefore correlate gaze behavior with additional contextual data, opening new pathways for in-depth XR UX analysis.
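To illustrate how timestamped logs make this kind of correlation possible, here is a minimal Python sketch that pairs each gaze sample with the nearest reading from an external sensor. It is not Augury's actual implementation or data format: the function names, the `(timestamp, target)` sample layout, and the heart-rate values are all assumptions made up for this example.

```python
from bisect import bisect_left


def nearest_reading(sensor_times, sensor_values, t):
    """Return the sensor value whose timestamp is closest to t.

    Assumes sensor_times is non-empty and sorted in ascending order.
    """
    i = bisect_left(sensor_times, t)
    if i == 0:
        return sensor_values[0]
    if i == len(sensor_times):
        return sensor_values[-1]
    before, after = sensor_times[i - 1], sensor_times[i]
    # Pick whichever neighbouring reading is closer in time (ties go to the earlier one).
    return sensor_values[i] if after - t < t - before else sensor_values[i - 1]


def align(gaze_samples, sensor_times, sensor_values):
    """Pair each (timestamp, target) gaze sample with the nearest sensor reading."""
    return [
        (t, target, nearest_reading(sensor_times, sensor_values, t))
        for t, target in gaze_samples
    ]


# Hypothetical data: timestamps in seconds, heart rate in bpm.
gaze = [(0.10, "menu"), (0.52, "button"), (1.05, "poster")]
hr_times = [0.0, 0.5, 1.0]
hr_values = [72, 75, 81]

aligned = align(gaze, hr_times, hr_values)
for row in aligned:
    print(row)
```

Nearest-timestamp matching is the simplest option; if the external source samples at a very different rate, interpolating between readings or aggregating over a time window may be a better fit.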

While Augury itself is only a prototype, it highlights the potential of gaze-tracking tools in XR UX research. By enabling retrospective session analysis, and potentially even unmoderated user testing in XR, it shows how future tools could help designers, developers, and researchers gain deeper insights into how users perceive and interact with immersive environments. The project underscores the need for continued research and investment in this space to bridge the gap between raw XR data and actionable UX insights.

For more information, take a look at my bachelor's thesis.

My Contributions to this Project

Image Gallery

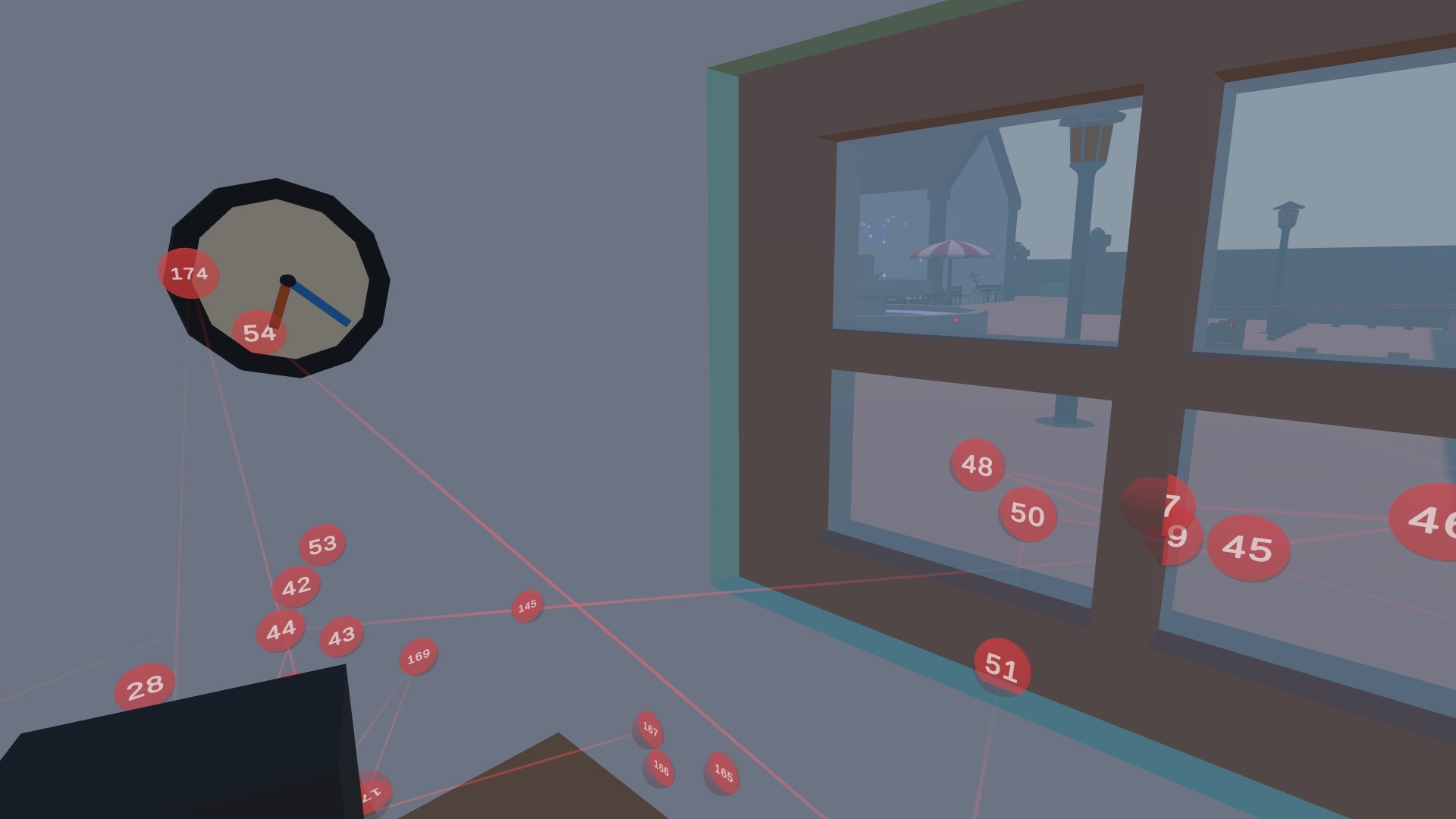

An example of previously recorded gaze data visualized in VR as a spatial gaze plot with red fixation indicators.

Close-up of a single 'fixation' indicator. It is connected to the previous and following 'fixations' by lines in 3D space. Each indicator displays its own unique fixation index, showing the order in which the fixations were recorded.

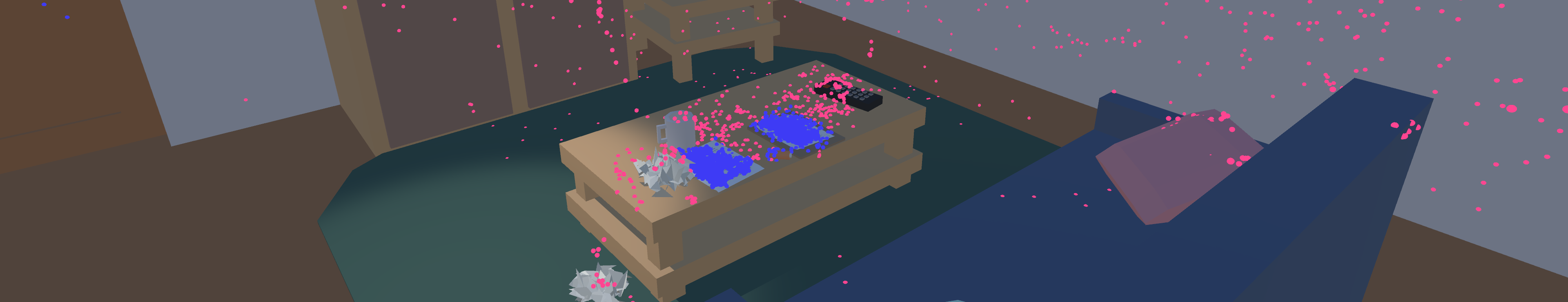

Gaze point data is separated into 'local' and 'global' sets to optimize internal data storage. To distinguish them visually, the corresponding GazePoints are shown in two different colors.

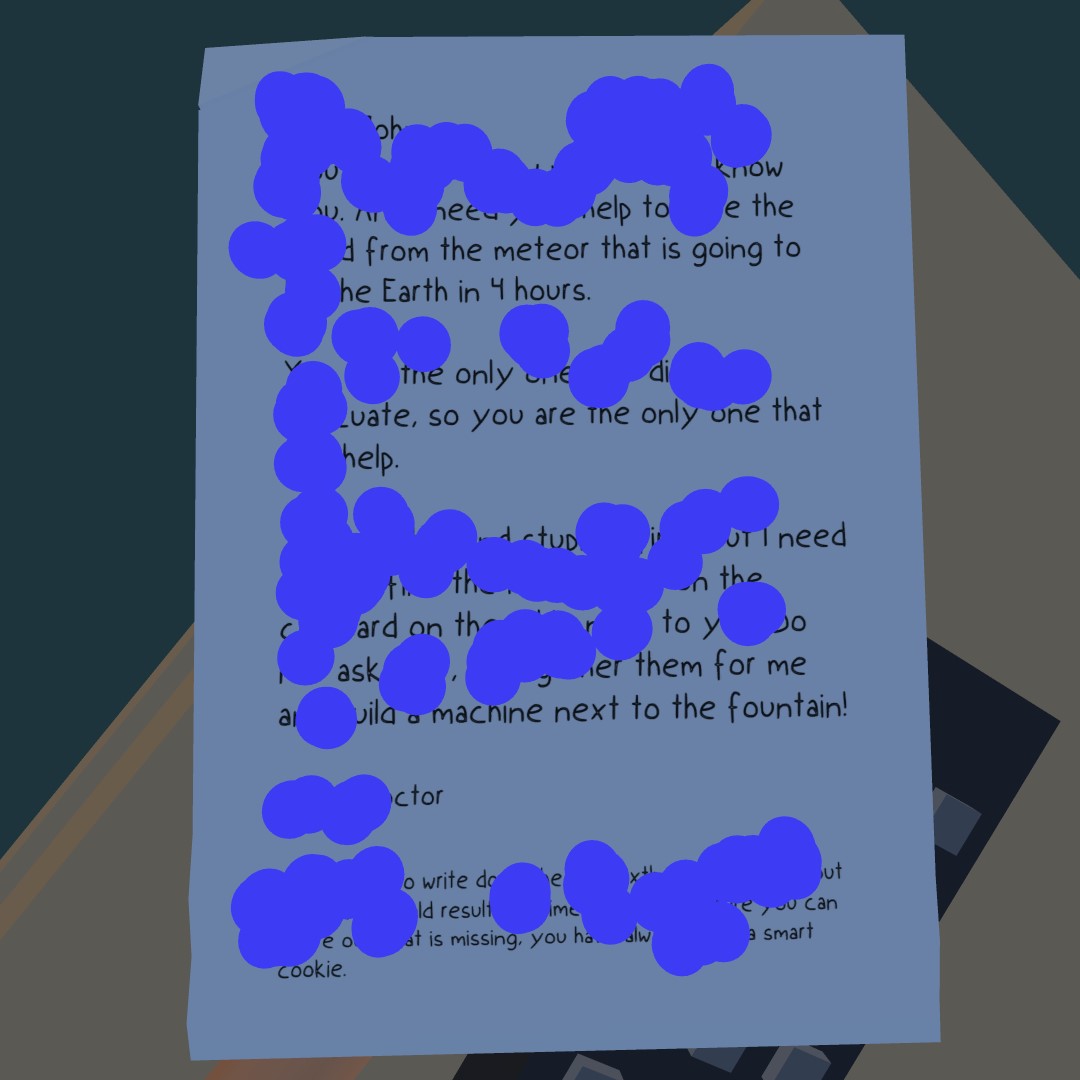

The eye-tracking data can be visualized to identify gaze patterns, shown here using the example of a user's reading patterns.

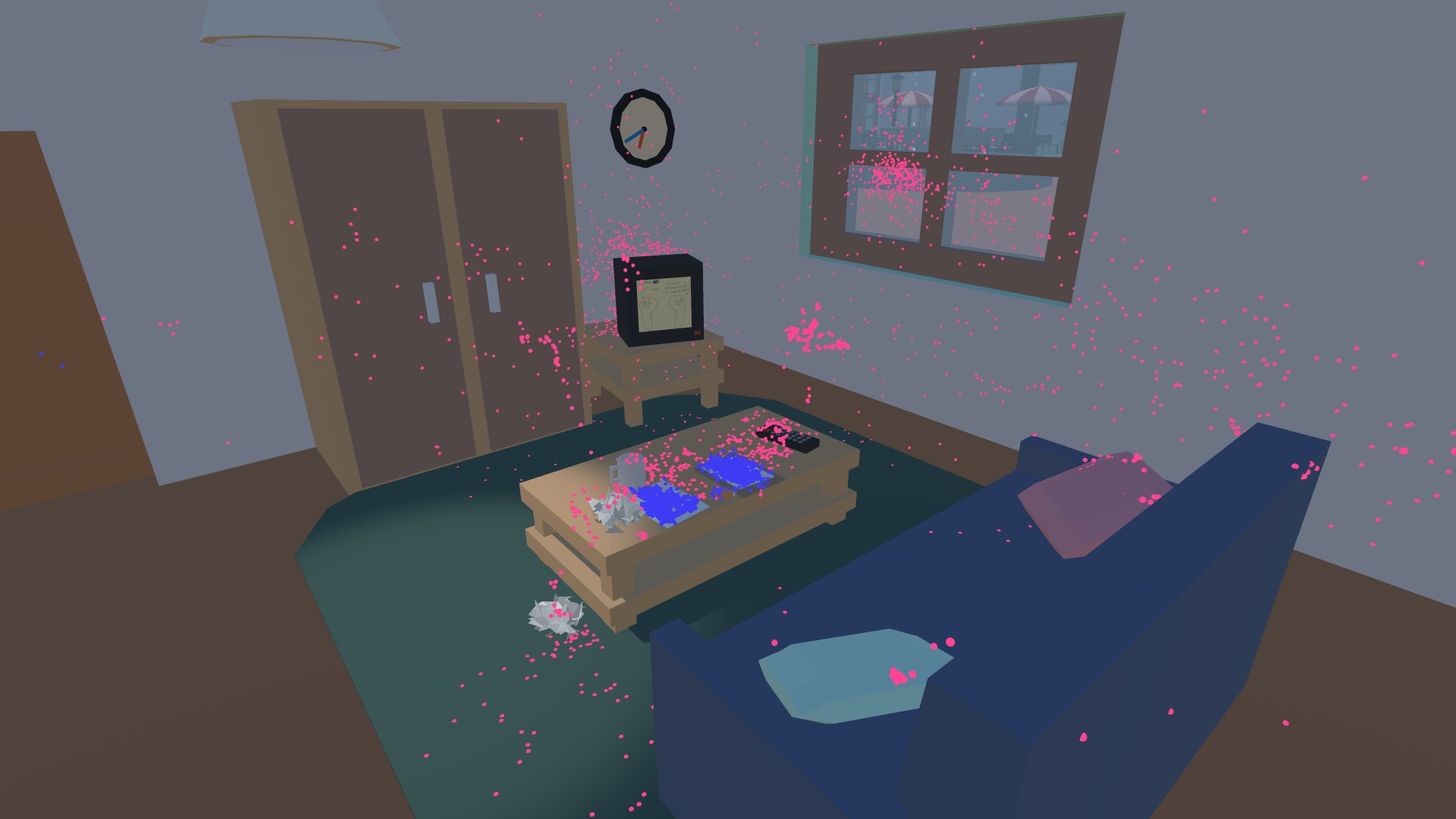

Visualization of gaze data as a density map in VR, highlighting the areas the user focused on most.

Top-down view of a density map showing which areas of the VR scene the user focused on most.
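As a rough illustration of the idea behind such a top-down density map, the following Python sketch bins gaze hit points into square cells on the horizontal plane and counts the hits per cell. This is only one simple way to compute a density, not necessarily how Augury does it: the `(x, y, z)` point layout with `y` as the up axis, the cell size, and all names are assumptions for this example.

```python
from collections import Counter


def density_grid(points, cell_size):
    """Count gaze hit points per square cell on the horizontal (x, z) plane."""
    counts = Counter()
    for x, _y, z in points:
        # Floor division maps each coordinate to the index of its grid cell,
        # including for negative coordinates.
        cell = (int(x // cell_size), int(z // cell_size))
        counts[cell] += 1
    return counts


def normalize(counts):
    """Scale counts to the range (0, 1] so the busiest cell has value 1.0."""
    peak = max(counts.values())
    return {cell: n / peak for cell, n in counts.items()}


# Hypothetical gaze hit positions in metres (x, y, z), with y pointing up.
hits = [
    (0.2, 1.5, 0.3),
    (0.4, 1.6, 0.1),
    (0.3, 1.4, 0.45),
    (1.2, 1.0, 0.2),
    (-0.1, 1.0, 0.1),
]

grid = density_grid(hits, cell_size=0.5)
intensity = normalize(grid)
```

The normalized values could then be mapped to a color ramp or opacity per cell. A smaller cell size gives finer detail but needs more samples per cell to produce a readable map.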